EMC 20% Unified Storage Guarantee !EXPOSED!

The latest update to this is included here in the Final Reprise! EMC 20% Unified Storage Guarantee: Final Reprise

For those of you who know me (and those who don’t, hi! Pleased to meet you!), I spent a lot of time at NetApp battling the storage efficiency game, always trying to justify where all of the storage space went in capacity-bound situations. Since joining EMC, however, all I ever hear from the competition is how ‘space inefficient’ we are, and frankly, I’m glad to see the release of the EMC Capacity Calculator so you can decide for yourself where your efficiency goes. Recently we announced this whole "Unified Storage Guarantee", and to be honest with you, I couldn’t believe what I was hearing. So I decided to take the marketing hype, set it on fire and start drilling down into the details, because that’s the way I roll. :)

I decided to generate two workload sets side by side to compare what you get when you use the calculators.

I have a set of requirements: ~131TB of File/Services data and 4TB of high-performance random-IO SAN storage.

There is an ‘advisory’ on the EMC guarantee that you have at least 20% SAN and 20% NAS in order to guarantee a 20% space efficiency advantage over others, so I modified my configuration to include at least 20% of both SAN and NAS. (But let me tell you, when I had it as just NAS... it was just as pretty :))
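The advisory boils down to a simple share check. Here’s a minimal Python sketch, assuming the 20% threshold applies to each protocol’s share of total usable capacity (my reading of the advisory, not an official EMC formula); `mix_shares` and `meets_unified_advisory` are hypothetical names. Run against the original requirement, it shows why the mix had to change:

```python
# Hypothetical helpers (not an EMC tool): check a SAN/NAS capacity mix against
# the "at least 20% SAN and 20% NAS" advisory, assuming the threshold applies to
# each protocol's share of the total usable capacity.


def mix_shares(nas_tb: float, san_tb: float) -> tuple[float, float]:
    """Return the (NAS, SAN) shares of total capacity as fractions."""
    total = nas_tb + san_tb
    if total <= 0:
        raise ValueError("total capacity must be positive")
    return nas_tb / total, san_tb / total


def meets_unified_advisory(nas_tb: float, san_tb: float, minimum: float = 0.20) -> bool:
    """True when both NAS and SAN make up at least `minimum` of the total."""
    nas_share, san_share = mix_shares(nas_tb, san_tb)
    return nas_share >= minimum and san_share >= minimum


# The original requirement from this post: ~131TB file/services (NAS) + 4TB SAN.
nas_share, san_share = mix_shares(131, 4)
print(f"NAS {nas_share:.1%}, SAN {san_share:.1%}")  # NAS 97.0%, SAN 3.0%
print("meets advisory:", meets_unified_advisory(131, 4))  # meets advisory: False
```

With only 4TB of SAN in a 135TB total, SAN sits around 3%, well short of the 20% the advisory asks for.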

Using NetApp’s Storage Efficiency Calculator I assigned the following data:

That seems pretty normal, nothing too out of the ordinary. I left all of the other defaults alone, as we all know that ‘cost per TB’ is relative depending on any number of circumstances!

So I click ‘Calculate’ and it generates this (beautiful) web page. Check it out! There is other data at the bottom which is cut off due to my screen resolution, but I guarantee it was nothing more than marketing jibber jabber and contained no technical details.

So, taking a look at that: this is pretty sweet. It gives me a cool little tubular breakdown and tells me that to meet my requirement of 135TB I’ll need 197TB in my NetApp configuration. That’s really cool; it’s very forthright and forthcoming.

What’s even cooler is that there are checkboxes I can uncheck in order to ‘equalize’ things, so to speak. Since the EMC Guarantee is based on usable capacity up front, without enabling any features, let me take a moment to establish some equality.

All I’ve done is uncheck Thin Provisioning (EMC can do that too, but doesn’t require it as part of the Guarantee, because we all know that sometimes customers WON’T thin provision certain workloads, so I get it!). I also turned off deduplication, just so I get a good feel for how many spindles I’ll be eating up from a performance perspective, and turned off dev/test clones (which didn’t make much difference, since I had little DB in this configuration).

Now, through no effort of my own, the chart updated to report that NetApp now requires 387TB to handle the same workload that a second ago required 197TB. That’s a little odd, but hey, what do I know... this is just a calculator taking data and presenting it to me!

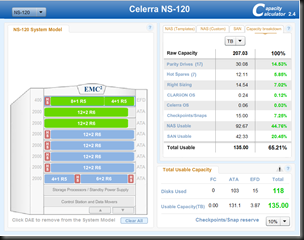

Now, with the very same details thrown into the EMC Capacity Calculator, let’s see how it looks.

According to this, I start with a raw capacity of ~207TB and, after all of the steps shown on screen, I end up with 135TB total usable, with at least 20% SAN and about twice that in NAS. Looks fairly interesting, right?

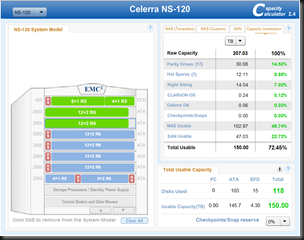

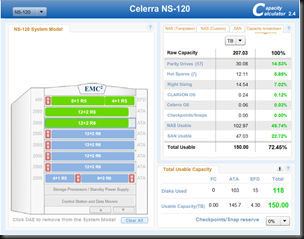

But let’s take things one step further and scrap snapshots on both sides of the fence. Throw caution to the wind... no snapshots. What does that do to my capacity requirements for the same ~135TB usable I was looking for in the original configurations?

On the NetApp side I reclaim 27TB of usable space (bringing it to 360TB raw), while on the EMC side I reclaim 15TB of usable space (150TB usable now) while still at 207TB raw.

But we both know the value of having snapshots in these file-type data scenarios, so we’ll leave snapshots enabled. Now it’s time to do some math; help me as I go through this, and pardon any errors.

| Configuration | NetApp Raw | NetApp Usable | Usable/Raw % | EMC Raw | EMC Usable | Usable/Raw % | Difference |
|---|---|---|---|---|---|---|---|
| FILE+DB | | | | | | | |
| Default Checkboxes | 197 TB | 135 TB | 68% | 207 TB | 135 TB | 65% | -3% |
| Uncheck Thin/Dedup | 387 TB | 135 TB | 35% | 207 TB | 135 TB | 65% | +30% |
| Uncheck Snaps | 369 TB | 135 TB | 36% | 207 TB | 150 TB | 72% | +36% |

However, just because I care (and I wanted to see what would happen), I decided to say "Screw the EMC Guarantee", threw all caution to the wind, and compared a pure-play SAN vs. SAN scenario, just to see how it’d look.

I swapped the numbers out to be Database Data and Email/Collaboration Data. The results don’t change (Eng Data seems to show a minor 7TB difference; I’m not sure why, but feel free to manipulate the numbers yourself, it’s negligible).

And I got this rocking result! (Yay, right?!) 202TB seems to be my requirement with all the checkboxes checked! But this is Exchange and SharePoint data (or Notes... I’m not judging what email/collab means ;)). I’m being honest and realistic with myself, so I’m not going to thin provision or dedup it in any way. So how does that change the picture?

It looks EXACTLY the same [as before]. Well, that’s cool, at least it is consistent, right?

Now let’s do the same thing on the EMC side of the house.

I want to note a few differences in this configuration. I upgraded to a 480 because I used 600GB FC drives exclusively; I’m not going to lie to myself by humoring my high-IO workloads on 2TB SATA disks. If you disagree, let me know, but I’m trying to keep it real :)

RAID5 is good enough with FC disks (if this were SATA, I’d follow best practice and assign RAID6 as well, so I’m keeping it true and honest). And it looks like this:

(Side note: It looks like this SAN calculation declared only 1 hot spare instead of the 6 used in the other configuration above. I’m not sure why that is, but a 5-disk difference isn’t a concern as far as my calculations go, and it is not reflected in my % charts below. FYI! I have since fixed the issue and introduced 6 spare disks. I also changed the system from 14+1 R5 sets to 4+1 and 8+1 R5 sets, which seems to more accurately reflect production-like workloads :))

Whoa, 200TB raw capacity to get me 135TB usable? Now wait a second (say the naysayers): you’re comparing RAID5 to RAID6, and that’s not a fair configuration because there is definitely going to be a discrepancy! And you have snapshots enabled for this workload too. (Side note: I do welcome you to compare RAID6 in this configuration; you’ll be surprised :))

I absolutely agree. So in the interest of equalization, I’m going to uncheck Double Disk Failure Protection on the NetApp side (against best practices, but effectively turning the NetApp configuration into a RAID4 config), and I’ll turn off Snapshot copies to be a fair sport.
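Part of why these comparisons swing so much is plain parity overhead. Here’s a minimal Python sketch of that first slice, using a hypothetical `data_fraction` helper that counts parity disks only (it ignores right-sizing, spares, reserves and snapshots). The RAID5 group shapes come from the side note above; the 14+2 double-parity group is just an illustrative comparison, not a configuration from either calculator:

```python
# Hypothetical helper: share of a RAID group's disks that hold data rather than
# parity. It ignores right-sizing, hot spares, reserves and snapshots, so it is
# only the first slice of the raw-to-usable story.


def data_fraction(data_disks: int, parity_disks: int) -> float:
    """Fraction of a RAID group's disks available for data."""
    if data_disks <= 0 or parity_disks < 0:
        raise ValueError("need at least one data disk and non-negative parity")
    return data_disks / (data_disks + parity_disks)


GROUPS = {
    "RAID5 4+1": (4, 1),
    "RAID5 8+1": (8, 1),
    "RAID5 14+1": (14, 1),
    "double parity 14+2 (illustrative)": (14, 2),
}

for name, (data, parity) in GROUPS.items():
    # e.g. 4+1 -> 80.0%, 8+1 -> 88.9%, 14+1 -> 93.3%, 14+2 -> 87.5%
    print(f"{name:<34} {data_fraction(data, parity):.1%}")
```

Unchecking Double Disk Failure Protection gives back one parity disk per group. RAID4 and RAID5 both spend one parity disk per group; they differ only in where parity lives (a dedicated disk versus striped across all members).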

There, it’s done. The difference is that EMC raw capacity has stayed the same (200TB), while NetApp raw capacity has dropped by 30TB, from 387TB to 357TB. (I do like how it reports "Total Storage Savings – 0%" :))

So, what does all of this mean? Why do you keep taking screen caps, ahh!!

This gives you the opportunity to sit down, configure what YOU want, get a good feel for which configuration feels right to you, and be open and honest with yourself about that configuration.

No matter how I try to swizzle it, I end up with EMC front and center on capacity utilization from raw to usable, which downright devastates anything in comparison. I do want to qualify this, though.

The ‘guarantee’ is that you’ll get 20% savings with both SAN and NAS. Apparently, even if I LIE to my configuration and say ‘Eh, I don’t care about that’, I still get OMG devastatingly positive capacity utilization results. So, taking the two scenarios I tested here and reviewing the math:

| Configuration | NetApp Raw | NetApp Usable | Usable/Raw % | EMC Raw | EMC Usable | Usable/Raw % | Difference |
|---|---|---|---|---|---|---|---|
| FILE+DB | | | | | | | |
| Default Checkboxes | 197 TB | 135 TB | 68% | 207 TB | 135 TB | 65% | -3% |
| Uncheck Thin/Dedup | 387 TB | 135 TB | 35% | 207 TB | 135 TB | 65% | +30% |
| Uncheck Snaps | 369 TB | 135 TB | 36% | 207 TB | 150 TB | 72% | +36% |
| EMAIL/Collab | | | | | | | |
| Default Checkboxes | 202 TB | 135 TB | 67% | 200 TB | 135 TB | 68% | +1% |
| Uncheck Thin/Dedup | 387 TB | 135 TB | 35% | 200 TB | 135 TB | 68% | +33% |
| Uncheck RAID6/Snaps | 357 TB | 135 TB | 38% | 200 TB | 151 TB | 76% | +38% |
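For anyone who wants to check my math, here’s a minimal Python sketch that recomputes the Usable/Raw % and Difference columns from the raw and usable TB figures in the table. The row labels and helper names are just for illustration. It prints one decimal place while the table shows whole percents, so a figure can land a point away depending on how it’s rounded:

```python
# Recompute the Usable/Raw % and Difference columns from the table's TB figures.
# Helper names are illustrative; values are reported to one decimal place.

ROWS = [
    # (scenario, NetApp raw TB, NetApp usable TB, EMC raw TB, EMC usable TB)
    ("FILE+DB: Default Checkboxes", 197, 135, 207, 135),
    ("FILE+DB: Uncheck Thin/Dedup", 387, 135, 207, 135),
    ("FILE+DB: Uncheck Snaps", 369, 135, 207, 150),
    ("EMAIL/Collab: Default Checkboxes", 202, 135, 200, 135),
    ("EMAIL/Collab: Uncheck Thin/Dedup", 387, 135, 200, 135),
    ("EMAIL/Collab: Uncheck RAID6/Snaps", 357, 135, 200, 151),
]


def usable_pct(raw_tb: float, usable_tb: float) -> float:
    """Usable capacity as a percentage of raw capacity."""
    return 100.0 * usable_tb / raw_tb


def compare(row: tuple) -> tuple[float, float, float]:
    """Return (NetApp %, EMC %, EMC minus NetApp in percentage points)."""
    _, na_raw, na_usable, emc_raw, emc_usable = row
    na = usable_pct(na_raw, na_usable)
    emc = usable_pct(emc_raw, emc_usable)
    return na, emc, emc - na


for row in ROWS:
    na, emc, diff = compare(row)
    print(f"{row[0]:<36} NetApp {na:5.1f}%  EMC {emc:5.1f}%  diff {diff:+5.1f} pts")
```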

When we’re comparing apples to apples, we seem to be meeting the guarantee whether NAS, SAN or Unified.

And if we take things a step further: out of the gate I get the capacity I require, and if I slap on Virtual Provisioning, Compression, FAST Cache, Auto-Tiering, Snapshots and a host of other benefits that the EMC Unified line brings to solve your business challenges... well, to be honest, it looks like you come out on top no matter which way you look at it!

I welcome you to ‘prove me wrong’ based on my calculations here. (I’m not sure how that’s possible, because I simply entered data which you can clearly see and pressed little calculate buttons... so if I’m doing some voodoo, I’d really love to know.)

I also like to keep this as realistic as possible, and we all know some people like their NAS-only or SAN-only configurations. The fact that the numbers in these calculations are hitting it out of the ballpark, so to speak, is absolutely astonishing to me! (Considering where I worked before I joined EMC... well, I’m as surprised as you are!) But I do know the results to be true.

If you want to discuss these details further, reach out to me directly (christopher.kusek@emc.com) or talk to your local TC (or your TC, TC Manager and me in a nicely threaded email ;)). They understand this rather implicitly; I’m just a conduit to make sure you folks in the community are aware of what is available to you today!

Good luck, and if you can find a way to make the calculations look terrible – Let me know… I’m failing to do that so far :)

!UPDATE! !UPDATE! !UPDATE! :) I was informed that apparently everything is not as it seems. (Which, frankly, is a relief, whew!)

The latest news on the street is that there is apparently a bug in the NetApp Storage Efficiency Calculator, so once that gets corrected, things should start to look a little more accurate. Let me breathe a sigh of relief around that, because (after being heavily slandered for ‘cooking the numbers’) the only inaccuracy going on here, as clearly documented, was in the source of my data.

However, since I’m not going to go back and rewrite everything above, I wanted to take things down to their roots: let’s get down into the dirt, the details, the raw specifics, so to speak. (If anything in the chart below is somehow misrepresented, inaccurate or incorrect, please advise. It is based on data I’ve collected over time, so hash it out as you see fit :))

| NetApp Capacity | GB | TB | EMC Capacity | GB | TB | GB Diff | TB Diff | % Diff |
|---|---|---|---|---|---|---|---|---|
| Parity Drives | 4000 | 3.91 | Parity Drives | 4000 | 3.91 | 0 | 0 | |
| Hot Spares | 1000 | 0.98 | Hot Spares | 1000 | 0.98 | 0 | 0 | |
| Right Sizing | 3519 | 3.44 | Right Sizing | 1822.7 | 1.78 | 1696.3 | 1.66 | |
| WAFL Reserve | 2045.51 | 2 | CLARiiON OS | 247.87 | 0.24 | 1797.64 | 1.76 | |
| Core Dump Reserve | 174.35 | 0.17 | Celerra OS | 60 | 0.06 | 114.35 | 0.11 | |
| Aggr Snap Reserve | 863.06 | 0.84 | | 0 | 0 | 863.06 | 0.84 | |
| Vol Snap Reserve (20%) | 3279.62 | 3.2 | Check/Snap Reserve (20%) | 3973.89 | 3.88 | -694.27 | -0.68 | |
| Space Reservation | 0 | 0 | | 0 | 0 | 0 | 0 | |
| Usable Space | 13118.5 | 12.8 | Usable Space | 16895.54 | 16.49 | -3777.04 | -3.69 | +23% |
| Raw Capacity | 28000 | 27.34 | Raw Capacity | 28000 | 27.34 | 0 | 0 | |
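If you want to audit the chart rather than take my word for it, here’s a minimal Python sketch that re-adds each side’s GB line items (they should come back to the 28,000GB raw total), converts to TB by dividing by 1024 (which is how the chart’s TB column works out), and computes the usable gap. On the % Diff column: depending on which side you treat as the baseline, the 3,777GB gap is about 22% of the EMC usable figure or about 29% more than the NetApp usable figure, so the chart’s +23% is closest to the first reading.

```python
# Rebuild the chart's TB column, per-side totals and the usable-space gap from
# its GB figures (28 x 1TB SATA, 4 parity, 1 spare on each side).
# TB is taken as GB / 1024, which is how the chart's TB column works out.

NETAPP_GB = {
    "Parity Drives": 4000, "Hot Spares": 1000, "Right Sizing": 3519,
    "WAFL Reserve": 2045.51, "Core Dump Reserve": 174.35,
    "Aggr Snap Reserve": 863.06, "Vol Snap Reserve (20%)": 3279.62,
    "Space Reservation": 0, "Usable Space": 13118.5,
}
EMC_GB = {
    "Parity Drives": 4000, "Hot Spares": 1000, "Right Sizing": 1822.7,
    "CLARiiON OS": 247.87, "Celerra OS": 60,
    "Check/Snap Reserve (20%)": 3973.89, "Usable Space": 16895.54,
}
RAW_GB = 28 * 1000  # 28 drives counted at 1000 GB each


def to_tb(gb: float) -> float:
    """Convert GB to TB the way the chart does (divide by 1024)."""
    return gb / 1024


for side, items in (("NetApp", NETAPP_GB), ("EMC", EMC_GB)):
    usable = items["Usable Space"]
    print(f"{side}: items sum to {sum(items.values()):.2f} GB of {RAW_GB} GB raw, "
          f"usable {to_tb(usable):.2f} TB ({usable / RAW_GB:.1%} of raw)")

gap = EMC_GB["Usable Space"] - NETAPP_GB["Usable Space"]
print(f"usable gap: {gap:.2f} GB ({to_tb(gap):.2f} TB)")
print(f"  as a share of EMC usable:  {gap / EMC_GB['Usable Space']:.1%}")
print(f"  relative to NetApp usable: {gap / NETAPP_GB['Usable Space']:.1%}")
```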

What I’ve done here is take the information and try to make each of these apples as SIMILAR as possible.

So you don’t have to read between the lines, let me break down this configuration: it assumes 28 1TB SATA disks, with 4 parity drives and 1 spare, in both configurations.

If you feel that I somehow magically made numbers do something they shouldn’t, say so. Use THIS chart; don’t create your own build-a-config workshop table unless you feel this one is absolutely worthless and you truly need to.

You’ll notice that things like Parity Drives and Hot Spares are identical (as they should be). Where we start to see discrepancies is around things like WAFL Reserve, Core Dump Reserve and Aggr Snap Reserve. There are certainly areas of overlap, as shown above, and equally areas of difference, which is why on the EMC side I use those rows for the CLARiiON OS and the Celerra OS. I did have EMC match the default NetApp configuration of a 20% vol snap reserve (on the EMC side I call it Check/Snap Reserve). [It defaults to 10% on EMC, but for the sake of solidarity, what’s 20% amongst friends, right?] (On a side note, I notice that my WAFL Reserve figures might actually be considerably conservative: a good friend gave me a dump of his WAFL Reserve, and it came to 1% of total vs. raw, compared to the 0.07% in my calculation above. Maybe it’s a new thing?)

So, this is a whole bunch of math... a whole bunch of jibber jabber, even, so to speak. But this is what I get when I look at the RAW numbers. If I’m missing some other form of numbers, let it be known, but let’s discuss this holistically. Both NetApp and EMC offer storage solutions. NetApp has some -really- cool technology; I know, I worked there. EMC ALSO has some really cool technology, some of which NetApp is unable to even replicate. But before we get into cool-tech battles, sitting ringside at a cage match watching PAM duel it out with FAST Cache, or at ‘my thin provisioning is better than yours’ grudge matches, we have two needs we must account for.

Customers have data that they need to protect. Period.

Customers have requirements for a certain amount of capacity they expect to get from a certain number of disks.

If you look at the chart closely, there are some OMFG ICANTBELIEVEITSNOTWAFL features which NetApp brings to bear; however, they come at a cost. That cost seems to take the form of WAFL Reserve and Right Sizing (I’m not sure why NetApp’s right sizing comes in so much fatter than EMC’s, but it apparently does). So while I could talk all day long about each specific feature NetApp has, and the equivalent parity EMC has in that same arena, I need to start somewhere. And strangely, going back to basics comes to a 23% realized space savings in this scenario (which seems in line with the EMC Unified Storage Guarantee), which frankly I find really cool. As others commenting on this ‘guarantee’ have echoed, what the figures appear to show is that EMC capacity utilization is more efficient even before it starts to get efficient (through enabling technologies).

Obviously, though, for the record, I’m apparently riddled with vendor bias and have absolutely no idea what I’m talking about! [disclaimer: I have no idea what I’m talking about when I define and disclose I am in this post and others ;)] However, I’d like to go on record: based on these mathematical calculations, were I not an employee of EMC, and whether or not I had worked for NetApp in the past, I would have come to these same conclusions independently when presented with these same raw figures and numerical metrics. I continue to welcome your comments, thoughts and considerations when it comes to a capacity-bound debate, since this IS a pure-play CAPACITY conversation. [Save performance for another day; we can have that battle out right ;)]

I hope you found this as informative as I did taking the time to create, generate and learn from it. Oh, and I hope you find the (unmoderated) comments enjoyable. :) I’d love to moderate your comments, but frankly... I’d rather let you and the community handle that on my behalf. I love you guys, and you too Mike Richardson, even if you were being a bit snarky to me. {Hmm, a bit snarky or a byte snarky... Damn binary!} Take care, and thank you for making this my most popular blog post since my Mafia Wars and Twitter content! :)


![clip_image005[4]](http://www.pkguild.com/wp-content/uploads/2010/05/clip_image0054_thumb.png)

![clip_image007[4]](http://www.pkguild.com/wp-content/uploads/2010/05/clip_image0074_thumb.png)

![clip_image008[4]](http://www.pkguild.com/wp-content/uploads/2010/05/clip_image0084_thumb.png)

![clip_image009[4]](http://www.pkguild.com/wp-content/uploads/2010/05/clip_image0094_thumb.png)

![clip_image011[4]](http://www.pkguild.com/wp-content/uploads/2010/05/clip_image0114_thumb.png)
